The emergence of ChatGPT and similar technologies is opening up new business opportunities for memory manufacturers. Such systems process large amounts of data and require high-speed server components. High-bandwidth memory (HBM) is becoming the most sought-after type, and Samsung Electronics and SK hynix have seen a surge in orders since the beginning of the year.

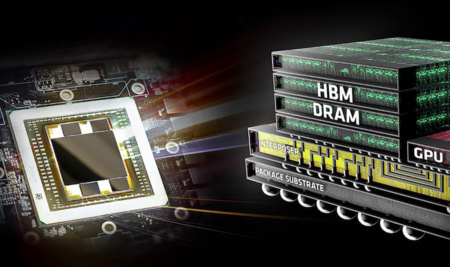

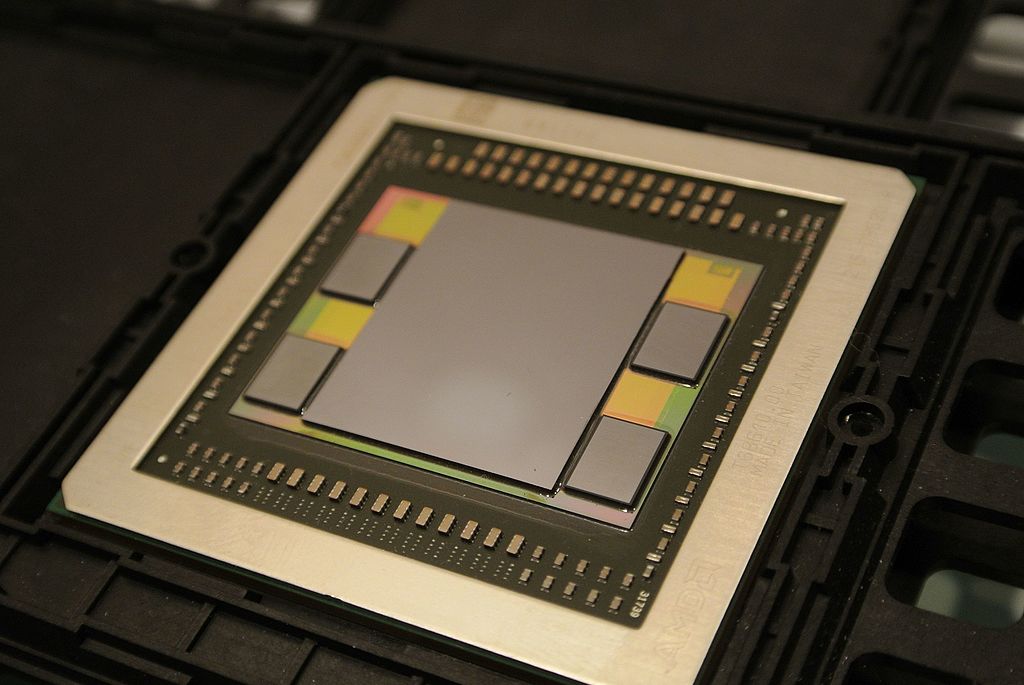

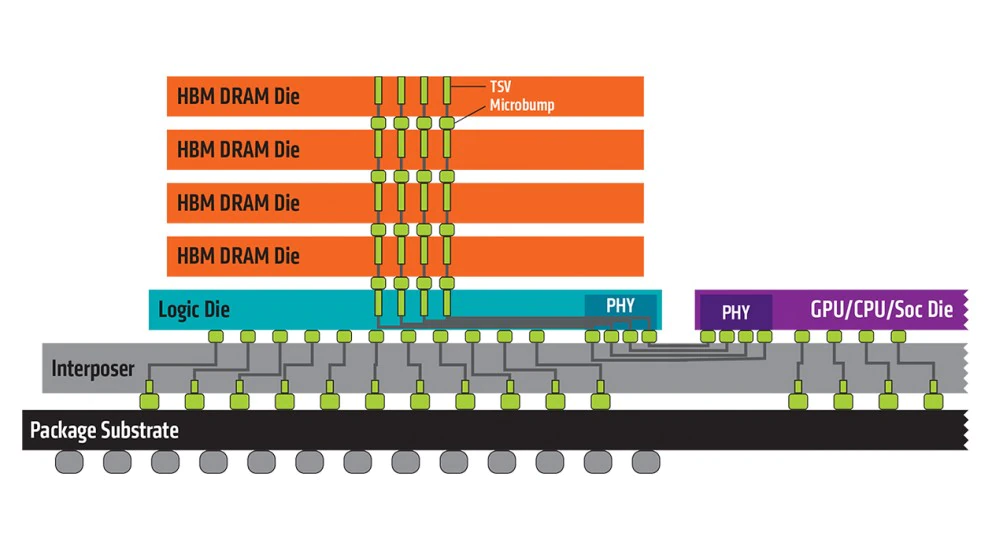

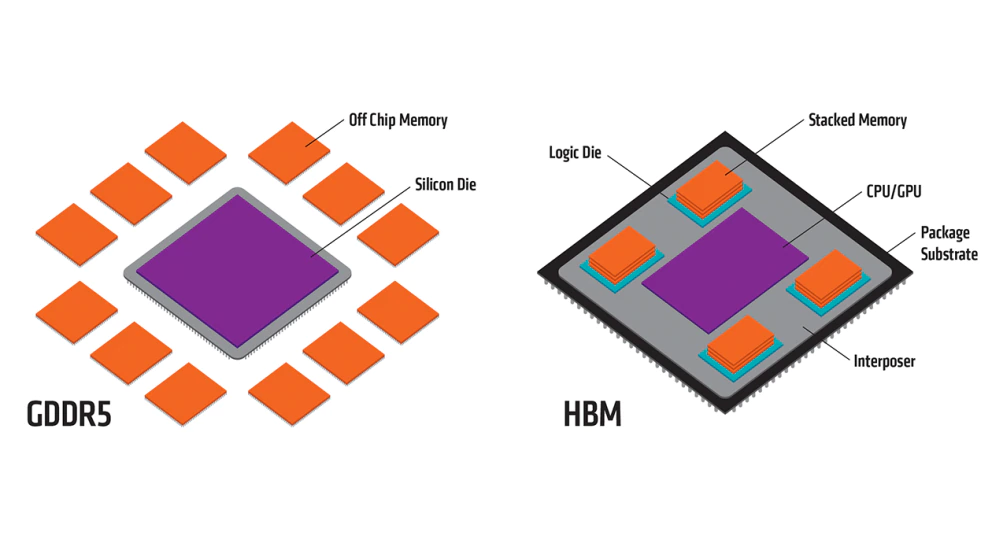

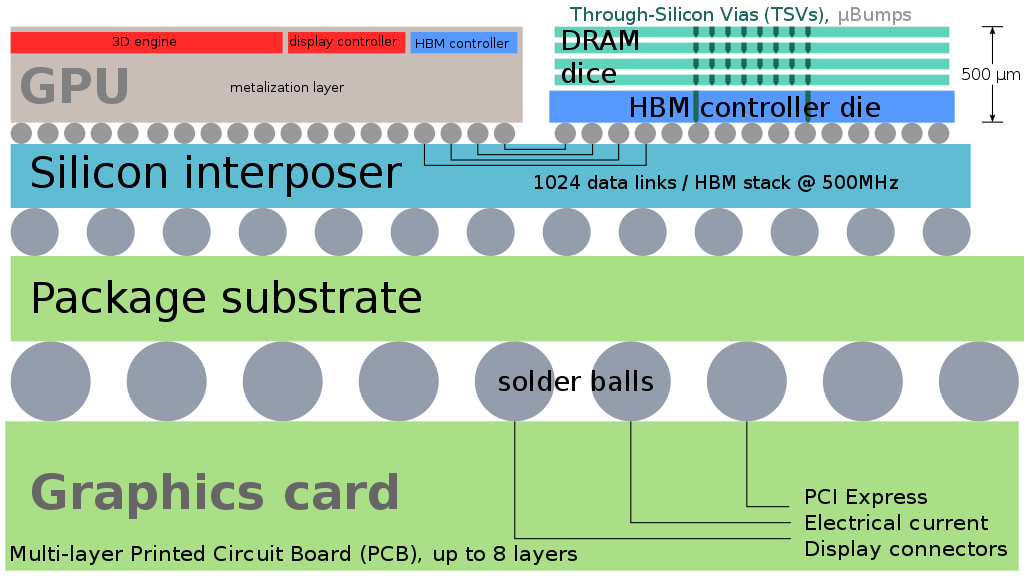

HBM processes data significantly faster than other types of DRAM because it stacks several memory chips and connects them vertically. Until now, despite its excellent characteristics, HBM has been used less than conventional DRAM. This is because the average price of HBM is three times higher than that of conventional DRAM, and its production is more complex and technologically demanding. However, the development of artificial intelligence technologies could change the situation.

NVIDIA, the world’s largest manufacturer of graphics processors used in computing, has asked SK hynix to supply a new product, HBM3 chips. Intel, the largest manufacturer of server processors, is also actively working on products equipped with HBM3. Insiders claim that HBM3 now costs five times as much as the highest-performance DRAM.

The market for high-performance memory modules is expected to grow rapidly, and competition between Samsung and SK hynix will intensify. The HBM market is still in its infancy, as HBM only began to see serious adoption in AI servers in 2022.

SK hynix leads the HBM market. The company developed HBM in collaboration with AMD in 2013. The Korean chipmaker has since released first-generation (HBM), second-generation (HBM2), third-generation (HBM2E) and fourth-generation (HBM3) modules, securing a market share of 60-70%.

In February 2021, Samsung, in cooperation with AMD, developed HBM-PIM, which combines memory chips and artificial intelligence processors into a single unit. When an HBM-PIM chip works alongside a CPU and GPU, calculations speed up significantly. In February 2022, SK hynix also presented a solution based on PIM technology.

Experts predict that in the medium to long term, the development of memory for artificial intelligence, such as HBM, will bring major changes to the semiconductor industry. One possible downside is an increase in equipment costs.

Source: BusinessKorea