Microsoft is developing its own AI chip, codenamed Athena, which can be used to train large language models and reduce the company's costly dependence on NVIDIA.

According to a report by The Information, the company has been secretly working on Athena since 2019. A small group of Microsoft and OpenAI employees already has access to the chips and is testing how well they perform with the latest large language models, such as GPT-4.

NVIDIA is currently the key supplier of AI chips. Its latest H100 GPUs sell for more than $40,000 on eBay, and by some estimates OpenAI would need more than 30,000 of NVIDIA's earlier A100 chips to commercialize ChatGPT.

While NVIDIA scrambles to build as many chips as possible to meet corporate demand, Microsoft is looking for ways to cut the cost of AI training. The company has reportedly accelerated work on Athena, its project to produce AI chips in-house. It is unclear whether Microsoft will ever make these chips available to Azure cloud customers, but it will probably start using them for its own projects, in particular those developed by OpenAI, as early as next year. The company has also drawn up a roadmap covering several generations of the chips.

Microsoft isn't positioning its upcoming AI chips as a direct replacement for NVIDIA's processors, but it believes the in-house effort could significantly reduce its costs as it continues to roll out AI features in the Bing search engine, Office applications, GitHub, and other products.

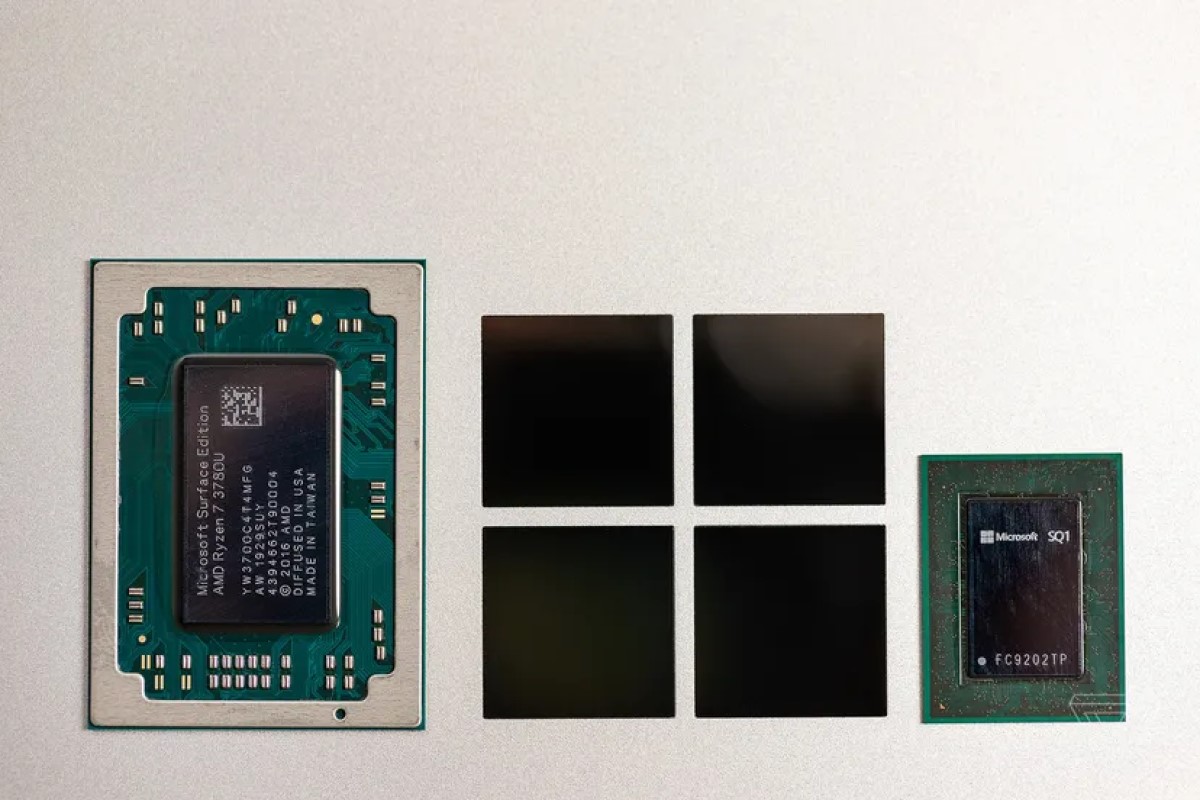

In late 2020, Bloomberg also reported that Microsoft was considering developing its own ARM-based processors for servers and possibly for a future Surface device. No such products have appeared yet, but the company has partnered with AMD and Qualcomm on custom chips for the Surface Laptop and Surface Pro X.

Amazon, Google, and Meta all have their own AI chips, but many companies still rely on NVIDIA processors to run the latest large language models.

Google has been developing and deploying its own AI chip, the Tensor Processing Unit (TPU), since 2016. TPUs now handle more than 90% of the company's internal AI training work. In a recent scientific paper, Google revealed that it used custom-designed optical switches to link more than 4,000 of these chips into a supercomputer, on which its PaLM model was trained for 50 days. According to the company, these machines are significantly faster and more energy-efficient than comparable NVIDIA systems.