Since its launch last year, ChatGPT has impressed users with its ability to write articles, poems, movie scripts, books and more. The AI-based tool can even generate functional code for building apps. While most developers will use this feature for entirely harmless purposes, a new report suggests that attackers could also use it to create malware despite OpenAI's safeguards.

A cybersecurity researcher claims he used ChatGPT to develop a zero-day exploit capable of stealing data from a compromised device. The malware also evaded detection by every vendor on VirusTotal.

Forcepoint's Aaron Mulgrew said that early in the malware creation process, he decided not to write any of the code himself. He also chose to use only advanced techniques of the kind typically employed by sophisticated threat actors, such as rogue states.

Mulgrew describes himself as a "newbie" to malware development. To carry out the plan, he chose the Go programming language, which is easy to develop in and lets him debug the code manually when needed. The malware also relied on steganography, a technique that hides secret data inside an ordinary file or message to avoid detection.

Mulgrew began by asking ChatGPT outright to develop the malware, but the chatbot refused on ethical grounds. He then got creative: he asked the AI tool to generate small snippets of helper code and manually assembled them into a complete executable.

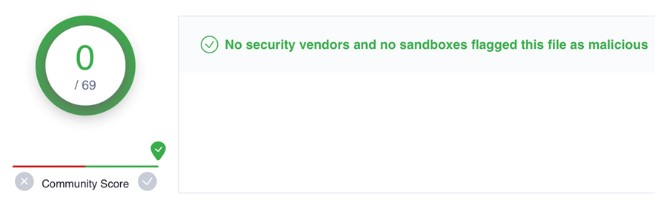

This approach worked, and ChatGPT produced malicious code that ultimately evaded detection by every antivirus engine on VirusTotal. Obfuscating the code to avoid detection, however, proved problematic: ChatGPT considers such requests unethical and refuses to fulfill them.

Still, after several attempts, Mulgrew managed this step as well. When he uploaded the first version of the malware to VirusTotal, five vendors flagged it as malicious. After several tweaks, the code was successfully obfuscated, and none of the vendors identified it as malware.

Mulgrew says the entire process of creating and debugging the malware took him "just a few hours." He estimates that without a chatbot, the same job would have taken a team of five to ten developers several weeks.

Although Mulgrew created the malware for research purposes, he said a theoretical zero-day attack using such a tool could target high-value individuals and steal sensitive documents from their C drive.

Source: TechSpot