Stability AI, the company behind the AI image generator Stable Diffusion, is now open-sourcing its StableLM language model.

The company has released a set of open-source large language models (LLMs) called StableLM and announced that they are available for developers to use and adapt on GitHub.

Like ChatGPT, StableLM is designed for efficient text and code generation. It is trained on The Pile, an open-source dataset that draws on a range of sources, including Wikipedia, the Stack Exchange network of question-and-answer sites, and the biomedical literature database PubMed.

Stability AI says that StableLM models are currently available with 3 billion and 7 billion parameters, with models of 15 billion to 65 billion parameters to follow.

The release builds on the company's mission to make AI tools more accessible, as it did with Stable Diffusion. That image synthesis tool was offered as a public demo and a beta, and the full model was available to download so that developers could experiment with it.

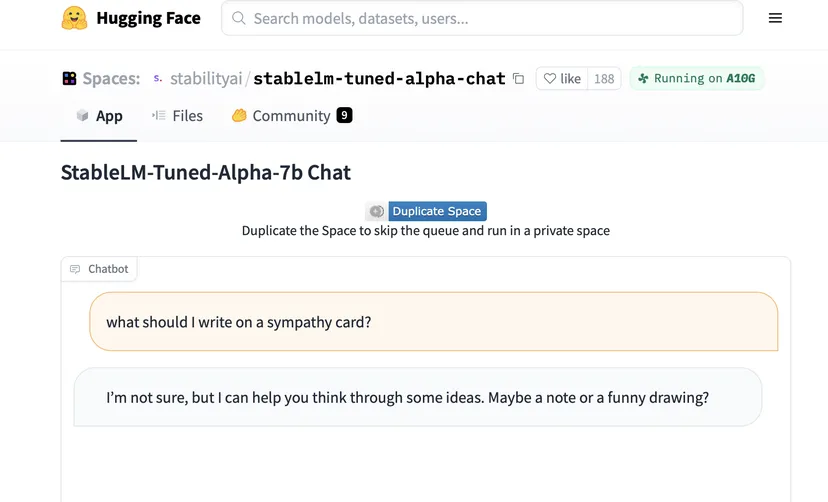

The Stability AI chatbot can be tested in a demo on Hugging Face. Stability AI cautions that while the datasets it uses should help steer the chatbot toward safer text, not all biased or toxic responses can be mitigated through fine-tuning.

Source: The Verge