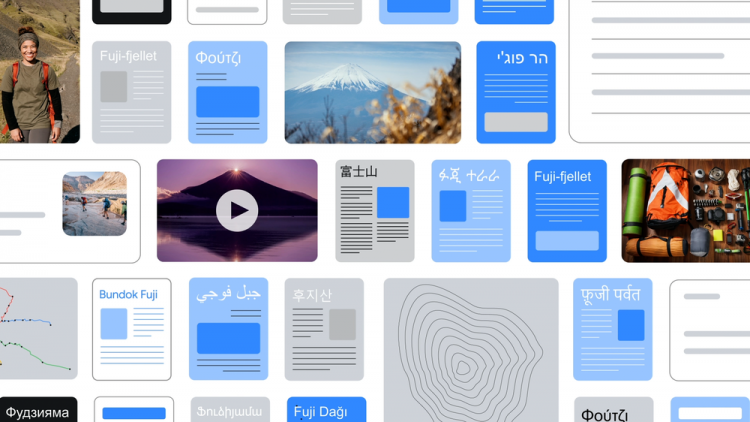

Google has announced a multimodal neural network called MUM (Multitask Unified Model). It is designed to improve organic search results as well as the company's other products. The model has been trained across 75 languages and can combine information from all of them, which should make it easier for users to find what they are looking for, according to the company's developer blog.

The creators explained that MUM is built on the Transformer deep neural network architecture. Like BERT, it is a Transformer model, but Google says it is 1,000 times more powerful. Because it is multimodal, MUM can form a broader understanding of the world. It currently understands text and images, and Google says it could be extended to audio and video in the future.

For example, in response to a query such as "hike to Mount Fuji", MUM will provide information on the most convenient routes. The search engine can also infer that, in the context of hiking, the user may need special equipment, and will suggest it. Thanks to its deeper knowledge of the world, the neural network can take the weather into account: fall is the rainy season on the mountain, so the AI may suggest buying a waterproof jacket. Users will also be able to take a picture of an object and ask the search engine whether it is suitable for the trip.

A launch date for MUM has not yet been announced. According to the developers, testing of the neural network has only just begun, but they plan to start integrating it into Google's products in the coming months.
