On May 14, Nvidia delivered the opening keynote of GTC. GTC, the GPU Technology Conference, is a specialist event with no consumer products. At GTC 2020, the company talked about smart cars, servers, data centers and artificial intelligence. Let’s see where the graphics industry is heading; I would like to highlight a few interesting points.

Content

- Data center as a computing unit

- Artificial intelligence in graphics creation

- About high-performance computers

- Nvidia’s recommendation system

- Jarvis, a conversational artificial intelligence

- Two announcements that will pass us by: Nvidia EGX A100 and EGX Jetson Xavier NX

- Nvidia Ampere in smart cars

- Conclusion

Data center as a computing unit

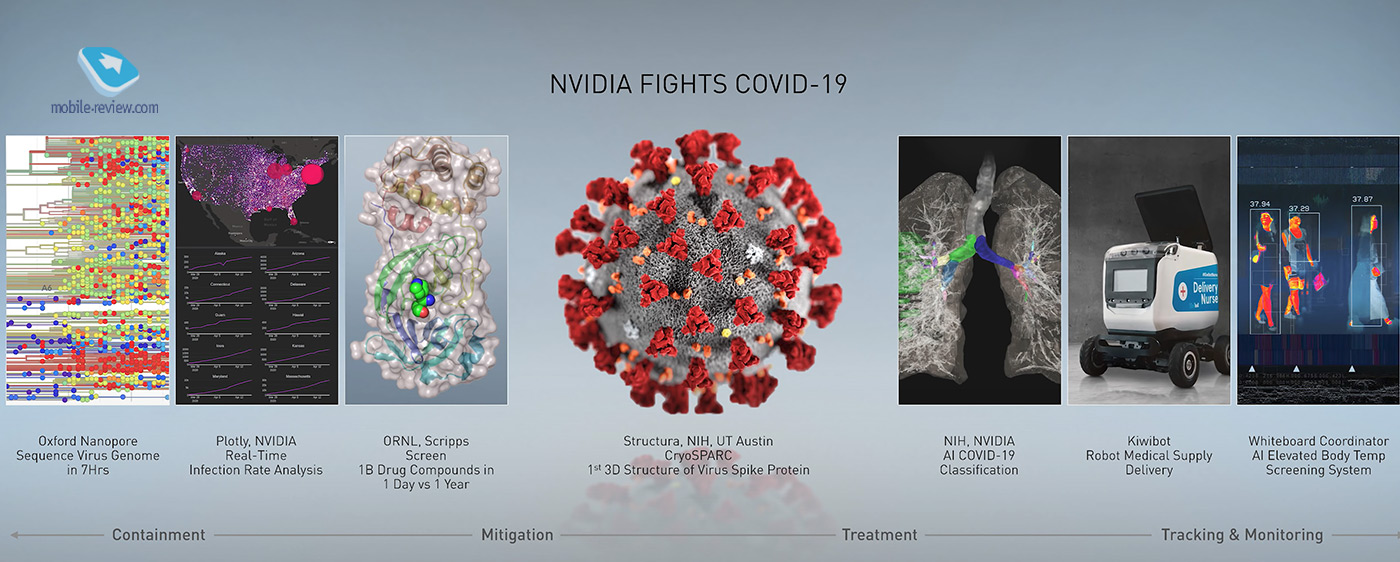

But let’s start with the coronavirus. This topic could not be ignored. Nvidia’s solutions help with everything from decoding genomes to powering medical robots.

By the way, in its presentation Nvidia showed a model from the University of Texas at Austin, but I assume that the Russian company Visual Science also used Nvidia graphics to create its own detailed model of the SARS-CoV-2 virus.

Nvidia CEO Jensen Huang (Jen-Hsun Huang) shared his vision for the industry. According to him, two main forces have driven the development of computing in recent years. The first is machine learning, that is, producing new results from already known data.

The second driver is the growing complexity of tasks and, accordingly, the growing size of applications, to the point where a single computer can no longer cope. This has led to an increase in the number of data centers. According to Mr. Huang, we are moving toward a world where the unit of computing is not a computer or a server but a whole data center. Accordingly, the industry faces a new problem: how to create, transfer, store and optimize data so that it can be used as efficiently as possible at data center scale.

From this prediction one can probably also draw a consumer conclusion: the game streaming model, where the powerful hardware that runs the game sits somewhere in the cloud, will become the main way of gaming for most people.

To speed up data delivery (that is, networking), Nvidia bought Mellanox. This appears to have been the biggest deal in Nvidia’s history. Mellanox makes high-speed networking equipment with a focus on supercomputers and data centers that handle big data.

Artificial intelligence in graphics creation

Mr. Huang started from afar, with the story of how, 40 years ago, one of the company’s employees described a model of how light behaves on an object.

The ball rolls, the surface is reflected in it, the light plays

And only 38 years later, in 2018, was Nvidia able to bring the model to the mass market in the form of the new-generation GeForce RTX cards with two innovative features: real-time ray tracing and artificial intelligence aimed at completing and improving the picture.

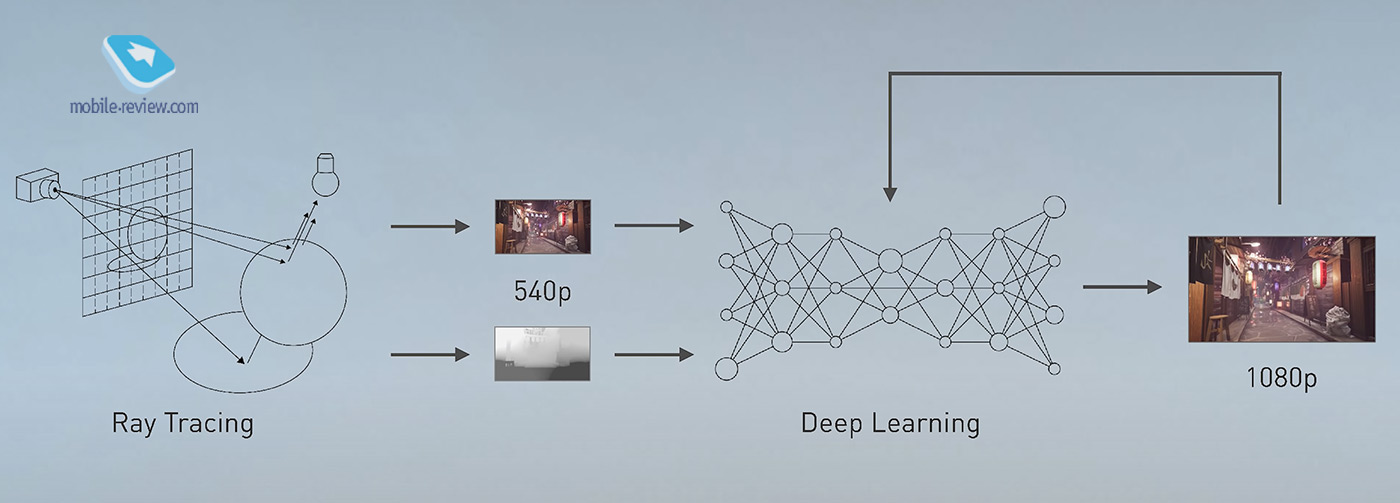

According to Mr. Huang, even so long after the model was created, video cards were still not powerful enough for ray tracing, so machine learning came to the rescue. In recent years, Nvidia has been actively training its AI. It is given a 540p picture, and its goal is to synthesize a Full HD picture based on what it has learned.
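To get a feel for how much the network has to invent, here is a quick back-of-the-envelope sketch. It assumes 16:9 frames (so 540p means 960×540), which the presentation did not spell out, and the function names are my own.

```python
# Pixel counts for the resolutions discussed in this section.
# Assumption: all frames are 16:9, so "540p" is 960x540 and "16K" is 15360x8640.
RESOLUTIONS = {
    "540p": (960, 540),
    "720p": (1280, 720),
    "1080p": (1920, 1080),   # Full HD
    "16K": (15360, 8640),
}


def pixel_count(name: str) -> int:
    """Total number of pixels in one frame of the given resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def upscale_factor(source: str, target: str) -> float:
    """How many output pixels must be produced per rendered input pixel."""
    return pixel_count(target) / pixel_count(source)


if __name__ == "__main__":
    for source in ("540p", "720p"):
        factor = upscale_factor(source, "1080p")
        print(f"{source} -> 1080p: {factor:.2f}x as many pixels")
    print(f"1080p -> 16K: {upscale_factor('1080p', '16K'):.0f}x as many pixels")
```

Under these assumptions, going from 540p to Full HD means the output has four times as many pixels as the card actually rendered, so roughly three out of every four pixels on screen come from the network.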

This is perhaps the most exciting part of the entire presentation. For training, Nvidia made a 16K model and ran the AI “trillions of times” through it. The trained AI is then delivered to users’ computers as part of the drivers. This technology is called DLSS, or Deep Learning Super Sampling, that is, supersampling based on deep learning (and how could it not be “super” when the original model is 16K).

Our wonderful site compresses all images mercilessly, so below this paragraph I will leave a link to the video, timestamped at this moment. It is best watched in 4K!

So, first the picture was shown in the original 16K.

Then they showed what a video card can render on its own at 720p.

Next came the first version, DLSS 1.0, which improved the picture by taking a 720p source and trying to “stretch” it. It should be noted right away that DLSS 1.0 never took off, because training the AI for each task (game) required a separate training setup. That is neither easy nor cheap, so developers simply ignored DLSS 1.0.

But Nvidia believed in success. About a month ago, the company introduced DLSS 2.0. This technology is more viable and likely to succeed: you no longer need a separate training setup for each game. The best part is that DLSS 2.0 can deliver better image quality than the Full HD picture the card renders on its own.

The point is that the video card simply renders the image as well as its capabilities allow. DLSS 2.0, however, knows (or thinks it knows) what the original image looks like in super-high resolution, so the AI fills in even details that are not in the rendered frame. This is perhaps the main reason to buy an RTX card rather than a GTX one: GTX cards have no Tensor Cores, the separate processing units for artificial intelligence.

Video, starting at the 2.35 mark:

For example, this is the performance gain that DLSS 2.0 provides in Minecraft.

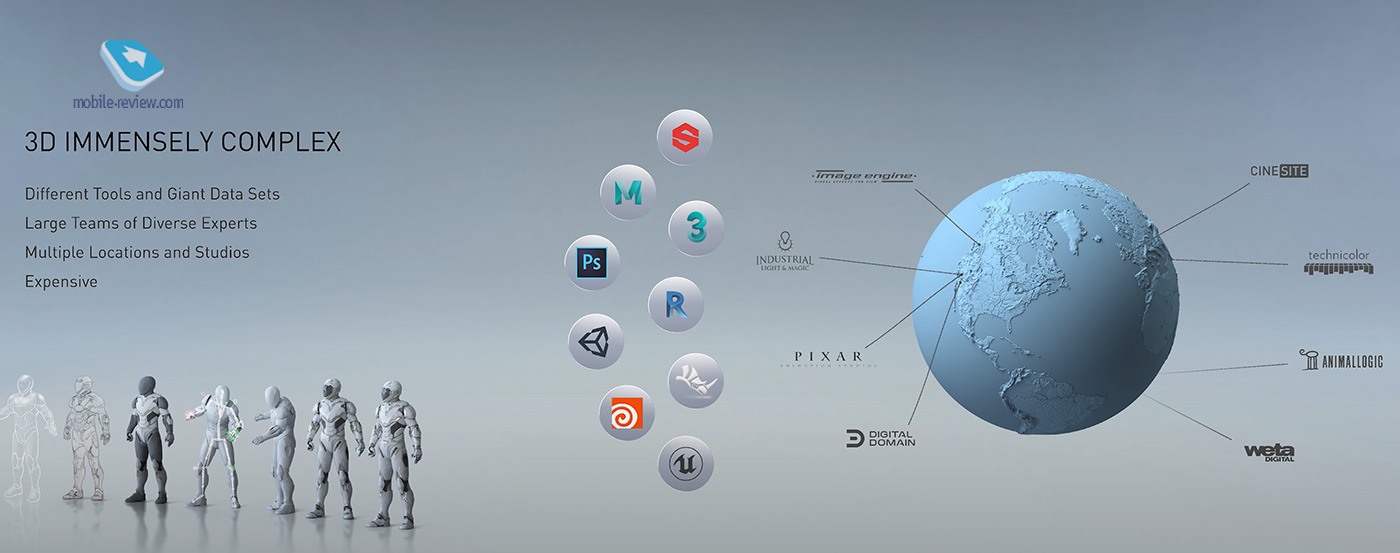

Along the way, Nvidia boasted that all the cool kids work with its graphics. Take that, AMD! Here, of course, it’s worth noting that it is high time Nvidia buried the hatchet and made friends with Apple again, since many in the film industry use the Mac Pro to render graphics. Then again, everything is relative: the picture shows Pixar, a studio that has historically used Apple products but apparently does not forget about Nvidia either.

In general, though, the story was framed as follows: Nvidia’s tools let several users work on the same 3D models simultaneously. All this is made possible by a new server packed with RTX cards. Buy it, plug in the cards, and go! It’s a real hit, especially during self-isolation, and not only then. A designer sits somewhere in Hawaii and makes a new Pixar cartoon with colleagues around the world. All you need is high-speed internet.

Take, for example, the video with the ball. It is notable for its lighting, shadows and natural physics, and for the fact that it was made jointly by designers and engineers across the country.

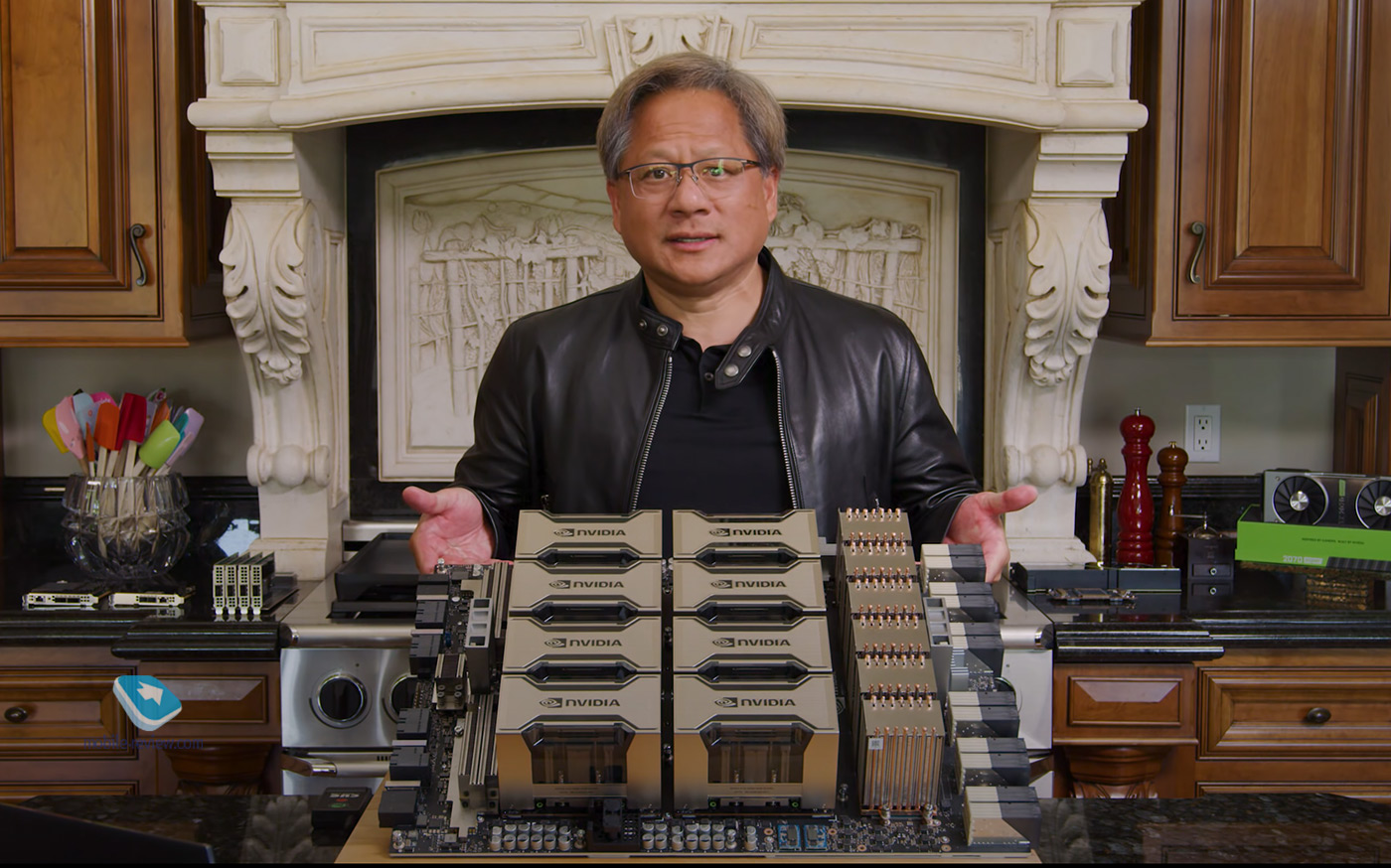

About high-performance computers

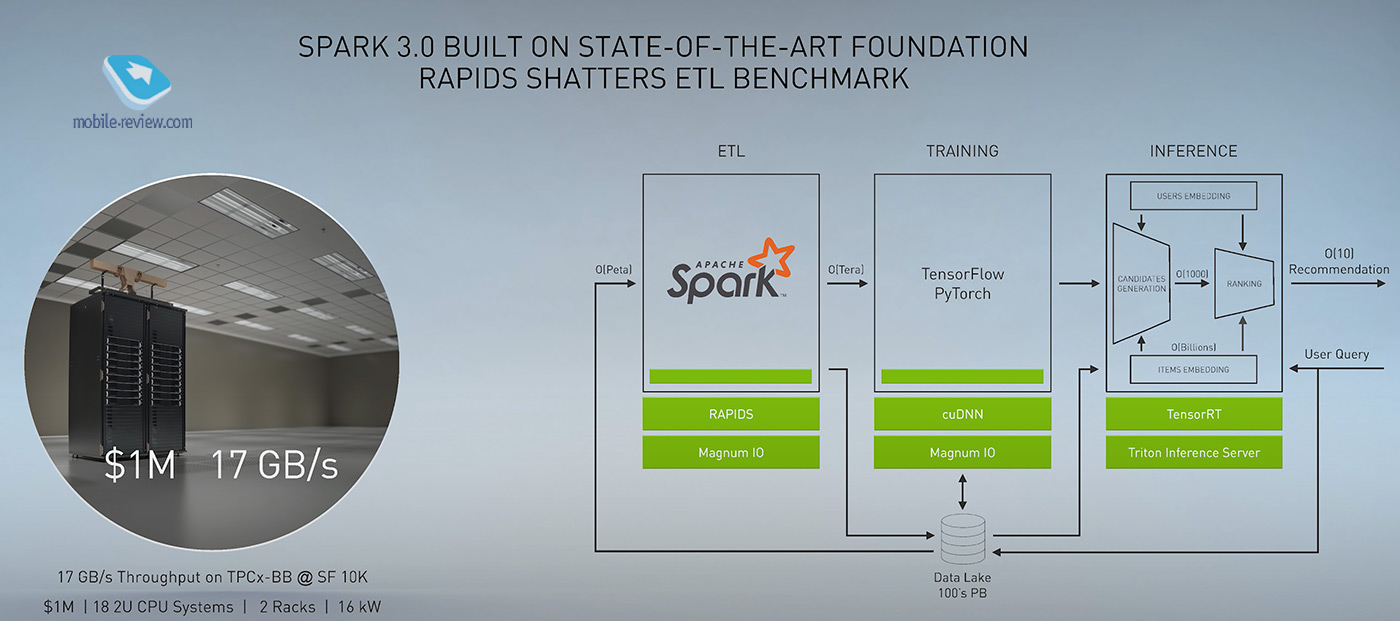

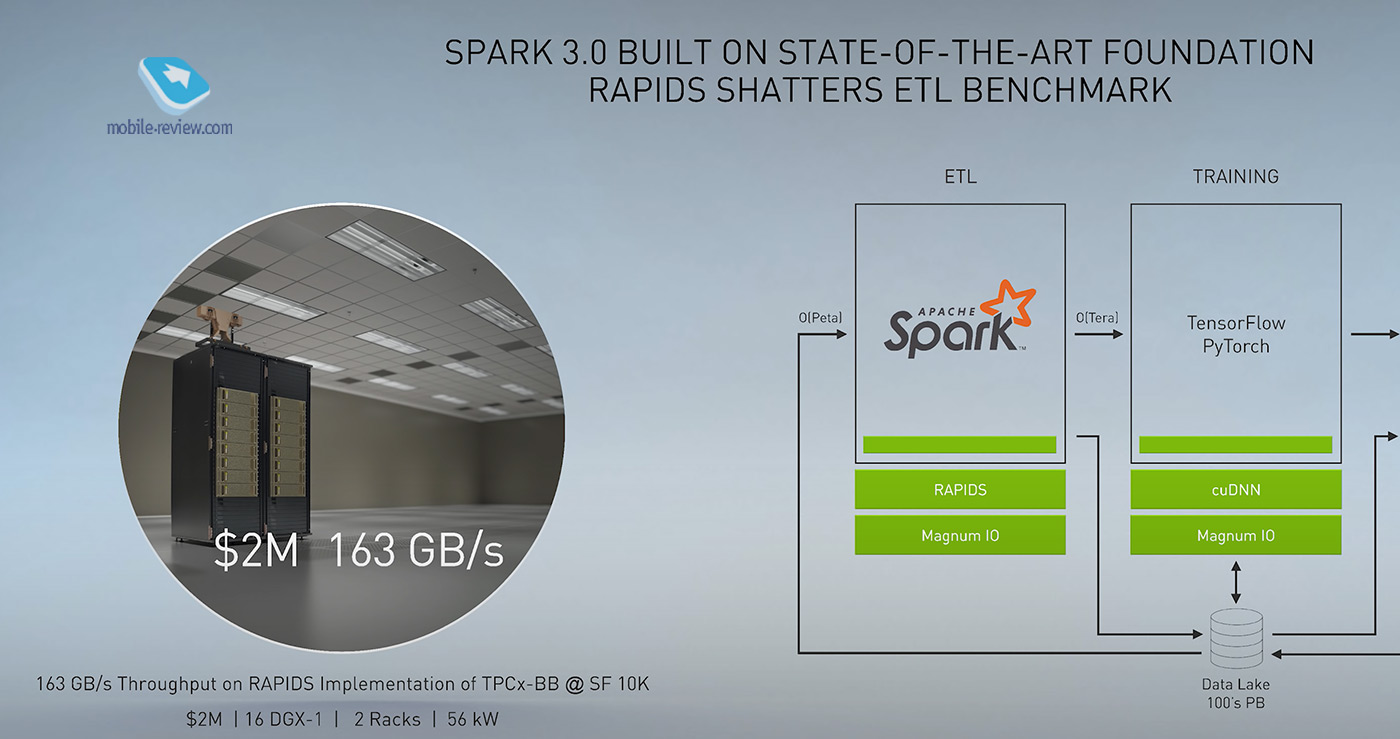

In the field of high-performance computing, Mr. Huang said things that are boring for the average consumer. In short, Spark, or Apache Spark, is an analytics engine for working with big data. And so Nvidia asks: what if our cool technology were used to speed up data processing?

For example, let Spark use the video card’s processors and memory to process data! Let Spark also schedule and divide the work so that everything is computed everywhere at once, on both the CPU and the video card! Let’s make a custom library that Spark can call. And let’s call it all Spark 3.0. And that is what they did.
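For the curious, here is roughly what this looks like from the user’s side. This is only a sketch: I am assuming that the “custom library” is the RAPIDS Accelerator for Apache Spark, the helper name `gpu_spark_submit_args` and the example values are made up for illustration, and a real cluster also needs the plugin jar and a GPU discovery script, which are omitted here.

```python
from typing import Dict, List


def gpu_spark_submit_args(app_path: str,
                          gpus_per_executor: int = 1,
                          tasks_per_gpu: int = 4) -> List[str]:
    """Build a spark-submit command line that asks Spark 3.0 for GPUs.

    Hypothetical helper: only the configuration keys are real Spark/RAPIDS
    settings, the function itself and its defaults are illustrative.
    """
    conf: Dict[str, str] = {
        # Load the RAPIDS Accelerator through Spark 3.0's plugin mechanism.
        "spark.plugins": "com.nvidia.spark.SQLPlugin",
        # Let the plugin move supported SQL/DataFrame operations to the GPU.
        "spark.rapids.sql.enabled": "true",
        # Spark 3.0 GPU-aware scheduling: GPUs per executor and per task.
        "spark.executor.resource.gpu.amount": str(gpus_per_executor),
        "spark.task.resource.gpu.amount": str(1 / tasks_per_gpu),
    }
    args = ["spark-submit"]
    for key, value in conf.items():
        args += ["--conf", f"{key}={value}"]
    args.append(app_path)
    return args


if __name__ == "__main__":
    # Print the command instead of running it, so no cluster is required.
    print(" ".join(gpu_spark_submit_args("my_job.py")))
```

The point of the design is that the job itself (`my_job.py` here, a placeholder) does not change: the scheduler hands out GPUs, and the plugin decides which operations run on them.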

As a result, everything started to work much faster. As proof, they showed a Dell superserver which, on top of a bunch of high-speed specs, costs a million dollars and consumes 16 kW! It delivers a data processing speed of 17 Gb/s. If you build a Spark 3.0 superserver from Nvidia parts, it will cost $2 million, but the data processing speed will be 163 GB/s. All big data scientists and processors should be thrilled, because it’s only twice as expensive but about 10 times as productive. And for a data center you need at least a dozen of these cabinets.
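The “twice the price, ten times the speed” claim is easy to check with the figures above. One assumption on my part: I treat both throughput figures as being in the same unit, since otherwise the comparison makes no sense.

```python
def throughput_per_million(cost_million_usd: float, throughput: float) -> float:
    """Data processing speed bought per million dollars spent."""
    return throughput / cost_million_usd


# Figures quoted in the presentation; throughput is assumed to share one unit.
CPU_COST, CPU_THROUGHPUT = 1.0, 17.0
GPU_COST, GPU_THROUGHPUT = 2.0, 163.0

speedup = GPU_THROUGHPUT / CPU_THROUGHPUT
cost_ratio = GPU_COST / CPU_COST
value_gain = (throughput_per_million(GPU_COST, GPU_THROUGHPUT)
              / throughput_per_million(CPU_COST, CPU_THROUGHPUT))

if __name__ == "__main__":
    print(f"speedup:            {speedup:.1f}x")
    print(f"cost ratio:         {cost_ratio:.1f}x")
    print(f"speed per dollar:   {value_gain:.1f}x better")
```

So the speedup is a little under 10x, and per dollar spent the Nvidia-based system comes out a little under 5x ahead.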

And at the end, Jensen Huang threw up his hands and said: “The more you buy, the more you save!”

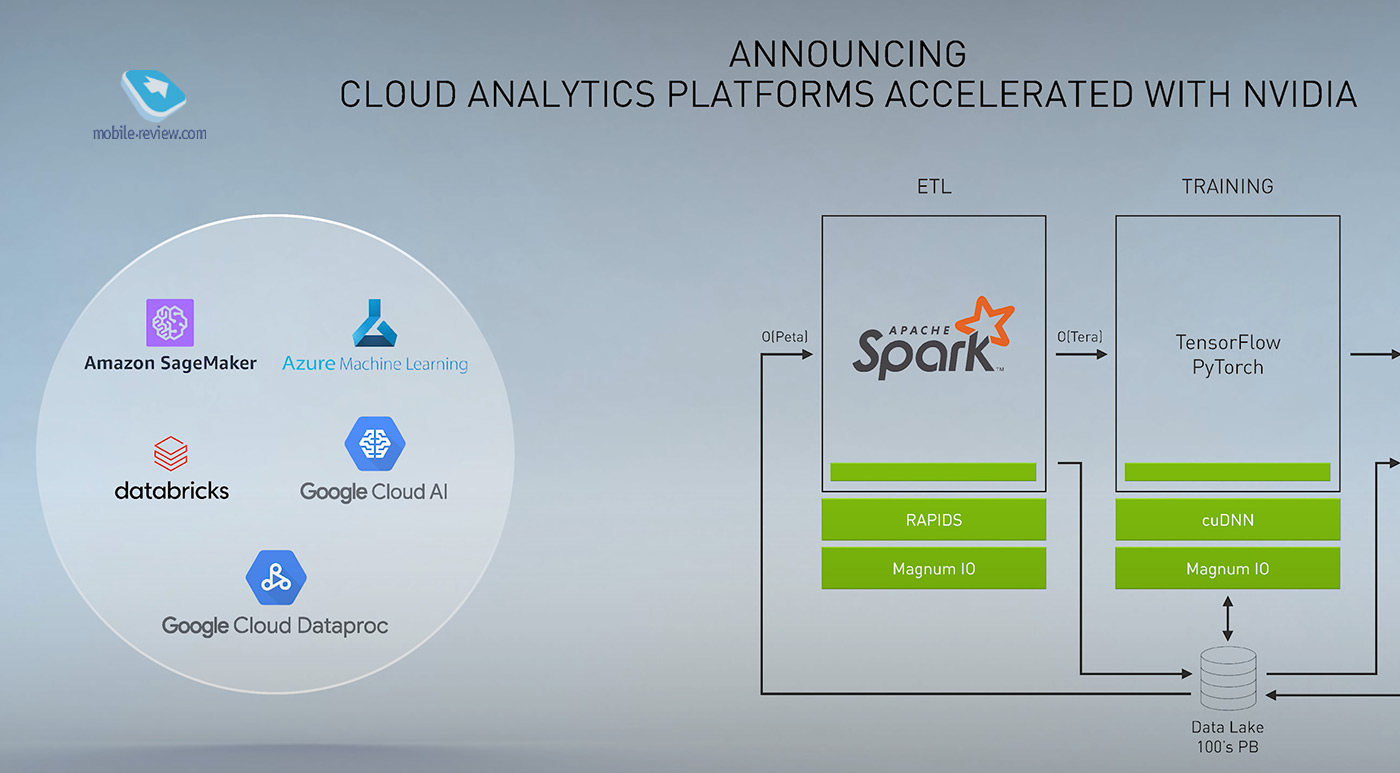

On that note, I think we can wrap up this topic. The thing is, of course, a good one, but I’m not sure I can afford it right now. Although, of course, I will need to buy a cabinet or two for the future. Until then, let Google and Microsoft test the new technology.

Nvidia’s recommendation system

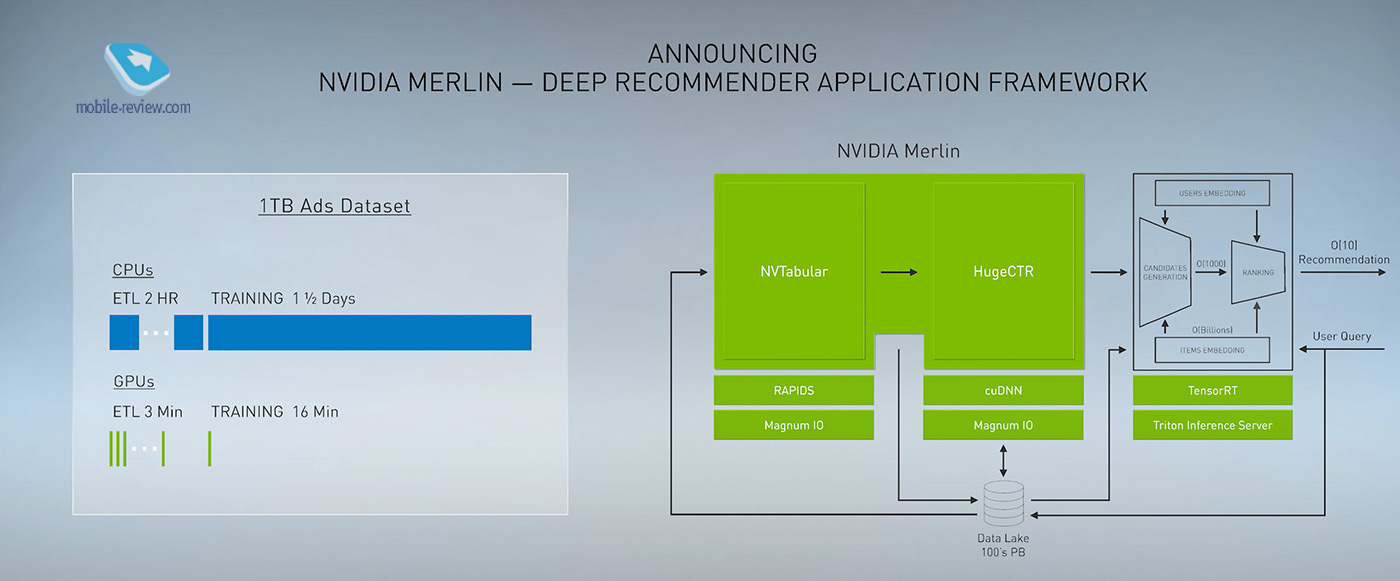

Here is another technology that an ordinary user will not use directly but will definitely encounter. Nvidia has introduced the Merlin recommendation system, which works for everything: movies, music, clothes.

Although the topic of recommendations may seem boring, it is super important. Just remember how suddenly Yandex.Music took off after Yandex introduced a new recommendation system. The service turned from an ugly duckling into a real treat!

Each recommendation service is a complex system in which each user is passed through filters while the system tries to pick the most suitable items from billions of options. All of this requires both computing power and artificial intelligence. Nvidia says it will make this technology available to the masses. The company has unveiled Nvidia Merlin, a framework, that is, a software infrastructure for building recommender systems. Merlin dramatically speeds up the training of predictive AI. As an example, they said that training a typical system on 1 TB of data takes 1.5 days, while Merlin handles it in 16 minutes. And for all this to work you need, of course, RTX servers with Tensor processors. Imagine how much prettier Yandex.Music will become if Yandex has enough money to buy them. Because although democratization was mentioned, no price was named.
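For scale, here is what “1.5 days versus 16 minutes” works out to. The numbers are the ones quoted in the presentation; the function name is just for illustration.

```python
MINUTES_PER_DAY = 24 * 60


def training_speedup(baseline_days: float, accelerated_minutes: float) -> float:
    """Ratio of the baseline training time to the accelerated training time."""
    return (baseline_days * MINUTES_PER_DAY) / accelerated_minutes


if __name__ == "__main__":
    # 1.5 days on a typical system versus 16 minutes with Merlin.
    print(f"{training_speedup(1.5, 16):.0f}x faster")
```

In other words, the claimed speedup is on the order of a hundred times or more, which is the difference between retraining a model once every couple of days and retraining it many times a day.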

Jarvis, a conversational artificial intelligence

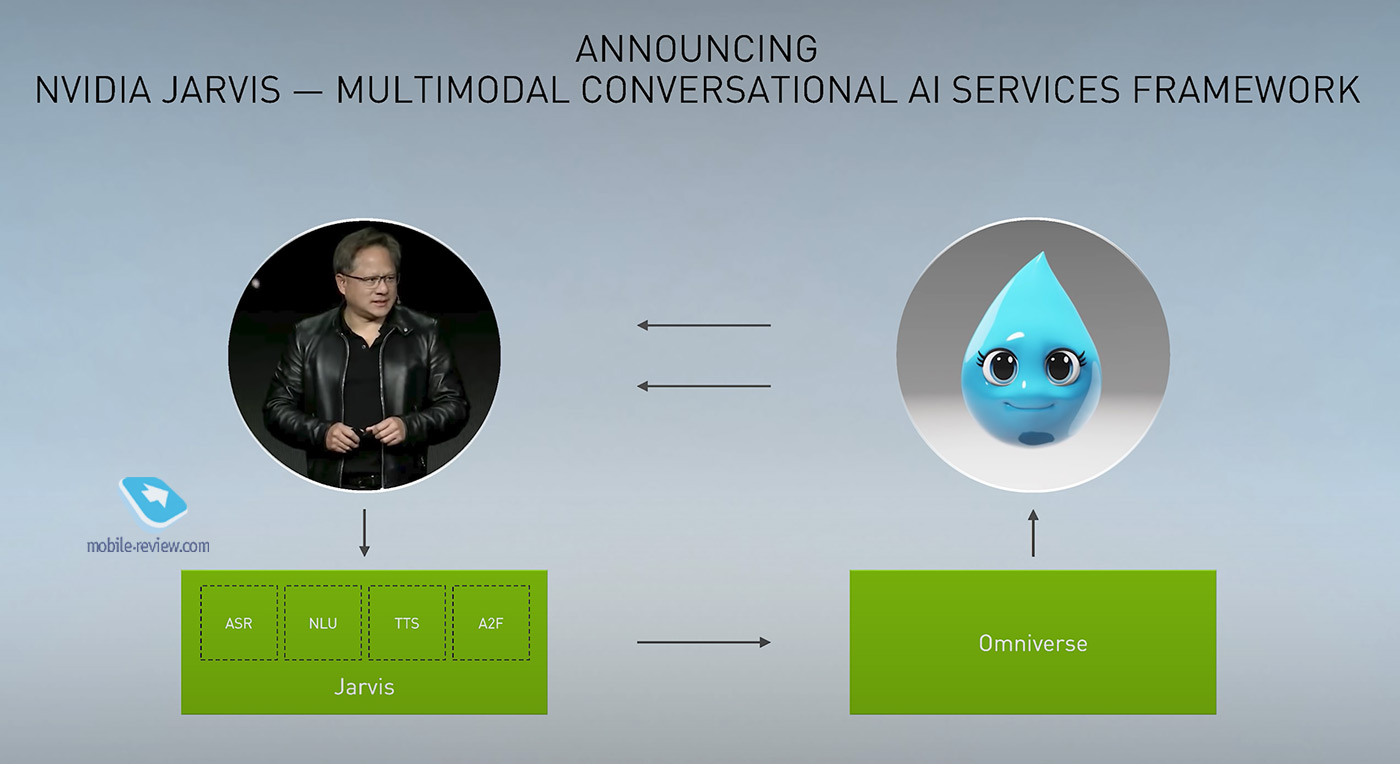

Nvidia probably thought: since we are so cool and make products that can dramatically speed up the analysis, selection and prediction of object behavior, why not offer a solution for conversational artificial intelligence? This type of AI really does face an abundance of complex tasks that require significant computing power: it has to recognize spoken speech, work out what the user means, find the answer, and then try to reply naturally and clearly. Although there are already quite a lot of conversational assistants, they know little and are significantly limited. So Nvidia came up with Jarvis. It is not a specific voice assistant but a framework that Yandex, for example, could use to make Alice smarter. Jarvis will ship with a ready-made set of behaviors, which should simplify implementation and further training.

The slide below is just an example that Nvidia made with Omniverse. The demo bot not only responds but even has facial expressions. The bot was trained to answer questions about the weather, and Yakutsk was mentioned as the coldest city in the world. That is how Russia got a mention at the GTC 2020 presentation. We are proud!

And you can see how the chatbot works in the video here:

Two announcements that will pass us by: Nvidia EGX A100 and EGX Jetson Xavier NX

The A100 is a data center GPU. If we ever come across these solutions, we are unlikely to know it: the EGX A100 can, for example, manage hundreds of cameras in airports, while the EGX Jetson Xavier NX is suited to managing a handful of cameras in small stores.

As Mr. Huang described it: “The NVIDIA EGX Edge AI platform turns a standard server into a small, secure, AI-powered cloud data center. With NVIDIA AI frameworks, companies can create a variety of AI services, from smart retail and robotic factories to automated call centers.”

All of this is based on the new NVIDIA Ampere architecture. To quote the official press release:

NVIDIA Ampere Architecture – the 8th NVIDIA GPU architecture – delivers the largest performance gains for a wide range of demanding tasks, including AI inference and 5G applications at the edge. This enables the EGX A100 to process large amounts of real-time data from cameras and other IoT sensors for fast insight and increased business efficiency.

With the NVIDIA Mellanox ConnectX-6 Dx NIC, the EGX A100 can receive up to 200 Gbps of data and send it straight to GPU memory for AI or 5G signal processing. With NVIDIA Mellanox’s time-triggered transport technology for telco (5T for 5G), the EGX A100 is a cloud-native, software-defined accelerator capable of handling the most latency-sensitive 5G tasks. This creates a complete AI/5G platform for real-time decision-making directly in the field: shops, hospitals and factories.

“Together with NVIDIA, we are building a high-performance virtualized 5G radio access network and an accelerated 5G packet switching network,” said Pardeep Kohli, president and CEO of Mavenir. “This will enable us to deliver a wide range of GPU-accelerated 5G services, from AI and machine learning to augmented and virtual reality.”
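To put the 200 Gbps figure from the press release in perspective, here is a rough conversion. The per-camera bitrate of 4 Mbps is my own hypothetical number, not something Nvidia stated, and a real link loses part of its capacity to protocol overhead.

```python
LINK_GBPS = 200          # network card capacity quoted in the press release
CAMERA_MBPS = 4          # HYPOTHETICAL bitrate of one compressed camera stream


def link_gigabytes_per_second(link_gbps: int) -> float:
    """Convert gigabits per second to gigabytes per second (8 bits per byte)."""
    return link_gbps / 8


def max_camera_streams(link_gbps: int, camera_mbps: int) -> int:
    """Upper bound on simultaneous streams, ignoring protocol overhead."""
    return (link_gbps * 1000) // camera_mbps


if __name__ == "__main__":
    print(f"{link_gigabytes_per_second(LINK_GBPS):.0f} GB/s into GPU memory")
    print(f"up to {max_camera_streams(LINK_GBPS, CAMERA_MBPS)} streams "
          f"at {CAMERA_MBPS} Mbps each")
```

Under that assumption, the network link has plenty of headroom for “hundreds of cameras”; the hard part is processing the video, which is exactly what the GPU is there for.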

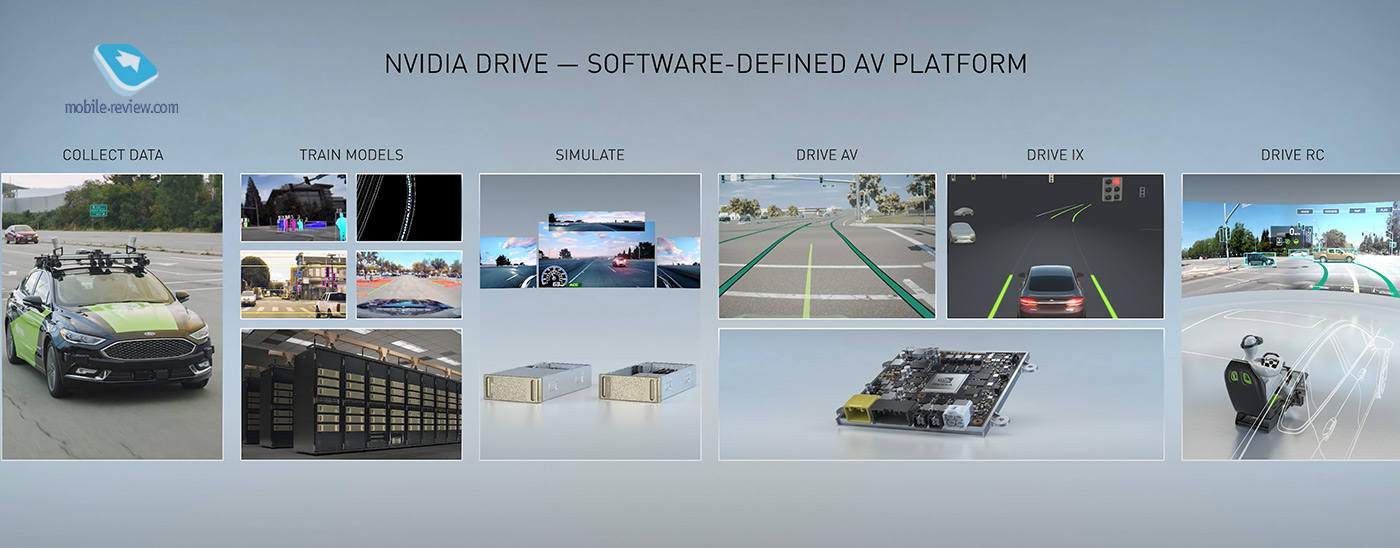

Nvidia Ampere in smart cars

Nvidia Ampere can also be used in smart cars. The trick is that, roughly speaking, all you need is the board in the picture. All the computation happens on it, and the smart, trained AI lives there too.

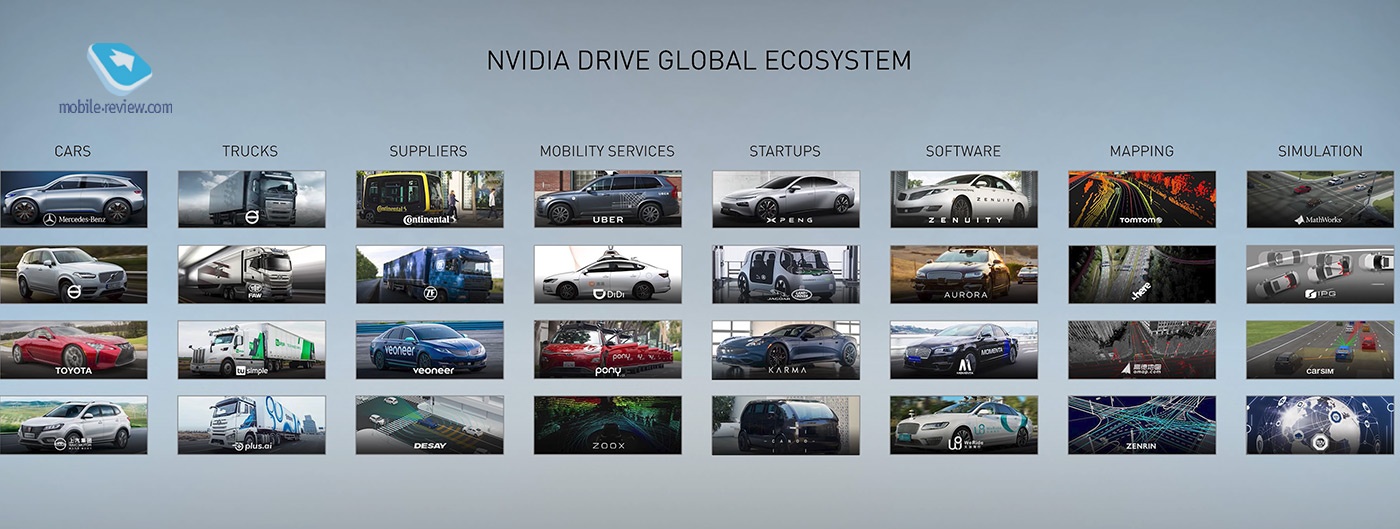

Nvidia’s smart car platform is open, so it is used around the world. Mostly by Chinese and Indian companies, plus Mercedes and Toyota. Where is Tesla?

Conclusion

Nvidia is striding briskly forward. I guess you, like me, are primarily interested in consumer things like DLSS 2.0. But on the whole, everything Nvidia presented is aimed at business users. Even DLSS 2.0 should reduce the load on game streaming data centers. It seems that while Microsoft has found a new source of growth in Azure and the cloud, Nvidia is betting on rethinking the computing and artificial intelligence market. The only apparent weak point is that the company still prefers to make hardware and infrastructure that other companies will then configure, build data centers from, and sell as computing capacity.

Anything to add? Write to eldar@mobile-review.com