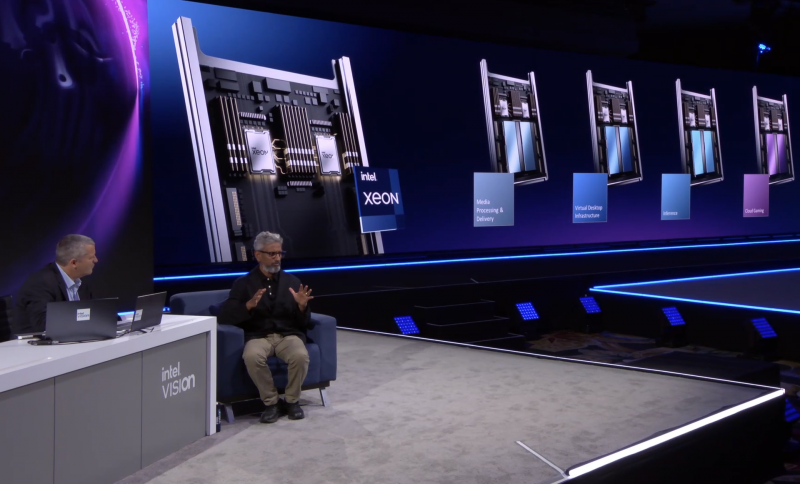

At its Intel Vision event, Intel unveiled server accelerators based on the Xe architecture, codenamed Arctic Sound-M (ATS-M). These are fairly versatile GPUs suited to cloud gaming platforms, media content providers, virtual desktops, inference systems, and video analytics. The accelerators are optimized for low total cost of ownership (TCO). The new products are expected to reach the market in the third quarter of 2022.

Images: Intel

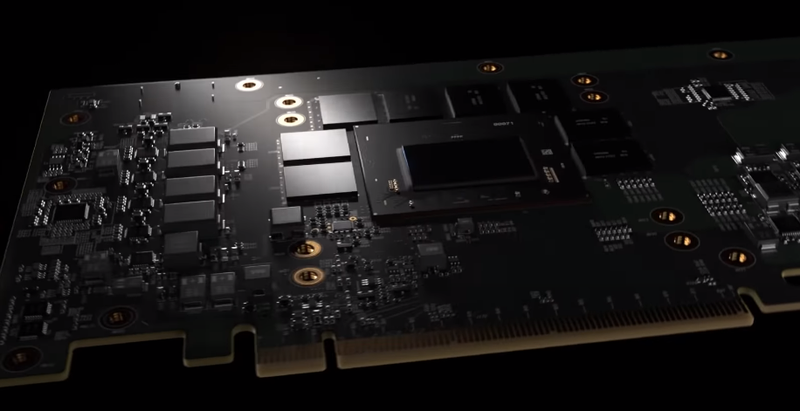

At announcement, the series comprises two accelerators: a full-size card with 32 Xe cores and a 150 W TDP, and a compact low-profile card with 16 Xe cores and a TDP of only 75 W. Both use a PCIe 4.0 x16 interface. Each variant carries four Xe video engines which, for the first time in the industry, support hardware encoding of AV1 video. The new accelerators also include ray tracing acceleration units and Intel XMX matrix compute units. On-board memory is GDDR6.

One ATS-M accelerator can:

- Deliver up to 150 TOPS in inference workloads;

- Transcode more than 30 Full HD video streams, or eight 4K streams;

- Stream more than 40 cloud gaming sessions;

- Flexibly distribute the load between multiple VDI sessions.
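As a rough capacity-planning illustration of the per-card figures above, the sketch below estimates how many accelerators a given workload would need. It treats the quoted numbers as hard per-card ceilings, which is an assumption: Intel says "more than 30" and "more than 40", does not state which of the two cards the figures refer to, and real throughput depends on codec settings and game titles. The names `PER_CARD_CAPACITY` and `cards_needed` are hypothetical.

```python
import math

# Per-card figures quoted in the announcement, used here as conservative
# ceilings (assumption: the article only says "more than" these values).
PER_CARD_CAPACITY = {
    "fullhd_transcode": 30,  # Full HD transcode streams per card
    "4k_transcode": 8,       # 4K transcode streams per card
    "cloud_gaming": 40,      # cloud gaming sessions per card
}


def cards_needed(workload: str, sessions: int) -> int:
    """Return the minimum number of cards for `sessions` of one workload type."""
    if sessions < 0:
        raise ValueError("sessions must be non-negative")
    # Round up: a partially used card still has to be installed.
    return math.ceil(sessions / PER_CARD_CAPACITY[workload])


if __name__ == "__main__":
    print(cards_needed("fullhd_transcode", 100))  # 4
    print(cards_needed("4k_transcode", 20))       # 3
    print(cards_needed("cloud_gaming", 500))      # 13
```

Mixed workloads on a single card are not modeled here; the estimate only holds when each card is dedicated to one task.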

The hardware AV1 encoder deserves special mention. At the same image quality, the new standard cuts bitrate by almost a third compared to H.264, so operators can either lower their bandwidth requirements or fit more video streams into the same channel. Intel is also emphasizing open standards: the oneAPI/oneVPL project will support all modern video compression formats (AV1, AVC, HEVC, and VP9) as well as the popular FFmpeg and GStreamer frameworks. Open-source Open Visual Cloud reference sets are available as well.
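To make the bandwidth trade-off concrete, here is a minimal arithmetic sketch. The 30% reduction is an assumed stand-in for "almost a third", and the 6 Mbit/s H.264 stream and 100 Mbit/s link are hypothetical values chosen only for illustration. The function names are not from any Intel tool.

```python
def av1_bitrate_kbps(h264_kbps: int, reduction_pct: int = 30) -> int:
    """Estimate the AV1 bitrate for equal quality, given an H.264 bitrate.

    reduction_pct is an assumption standing in for "almost a third".
    Integer arithmetic in kbit/s avoids floating-point rounding surprises.
    """
    return h264_kbps * (100 - reduction_pct) // 100


def streams_per_link(link_kbps: int, stream_kbps: int) -> int:
    """Return how many whole streams of a given bitrate fit into a link."""
    return link_kbps // stream_kbps


if __name__ == "__main__":
    h264 = 6_000    # hypothetical H.264 stream, kbit/s
    link = 100_000  # hypothetical uplink, kbit/s
    av1 = av1_bitrate_kbps(h264)
    print(av1)                           # 4200
    print(streams_per_link(link, h264))  # 16
    print(streams_per_link(link, av1))   # 23
```

Under these assumptions the same link carries 23 AV1 streams instead of 16 H.264 streams, which is the "more streams per channel" effect described above.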

For virtual desktop environments (VDI/DaaS), ATS-M offers flexible, fine-grained allocation of resources across multiple vGPUs. Intel also points out that using the hardware SR-IOV capabilities is free and requires no additional licensing, an apparent dig at NVIDIA.

The new accelerators are also suited to inference systems, especially AI video analytics: thanks to the new video engines, processing the incoming video stream will not become a bottleneck. For working with the accelerators, Intel offers the OpenVINO and oneDNN toolkits, which are compatible with TensorFlow and PyTorch.
